“Most SEO engagements measure AI citations like clicks. They assume a citation today is a citation next month. Half of them are gone inside five weeks.”

~ Cody C. Jensen, CEO & Founder, Searchbloom

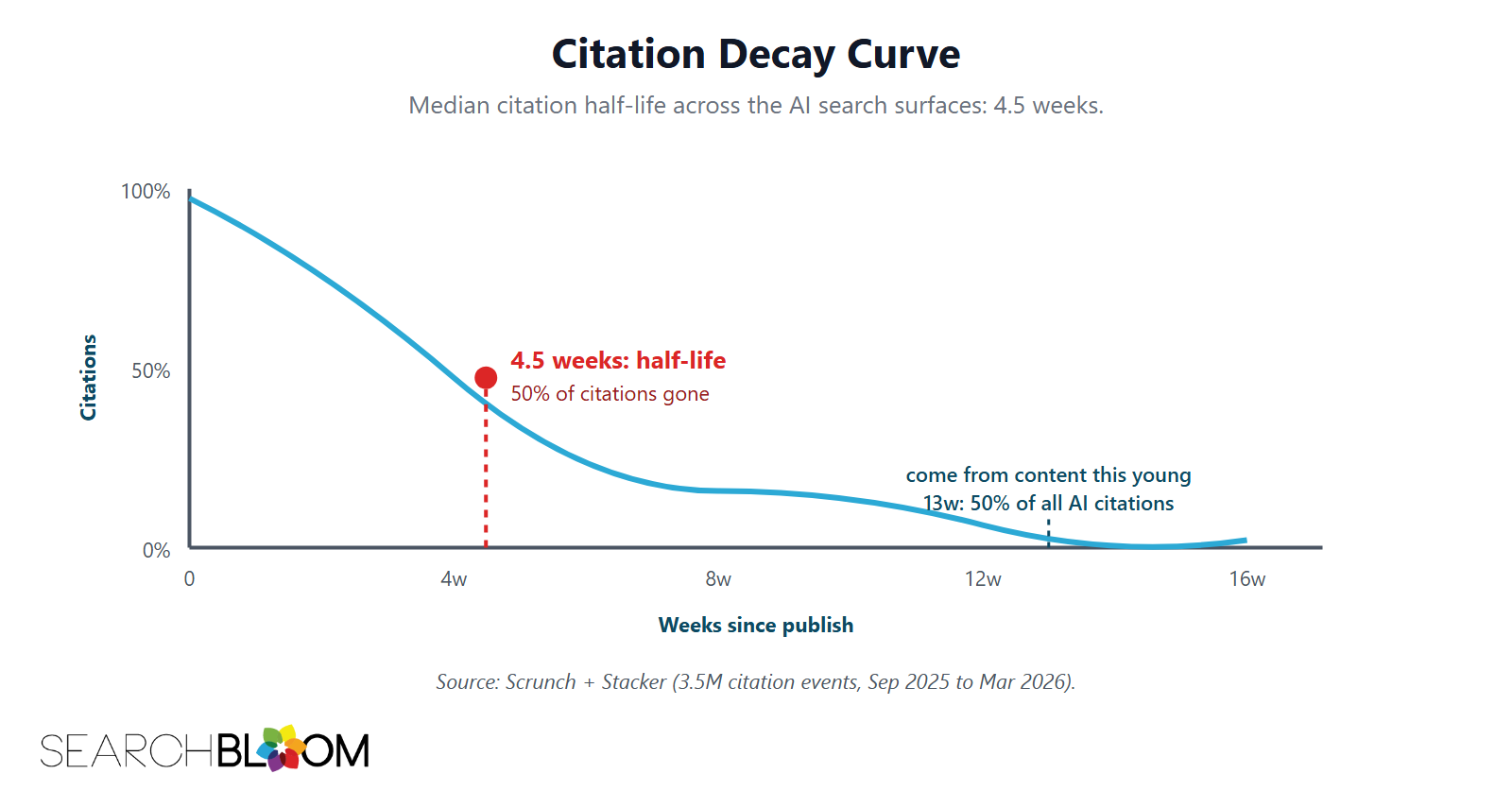

Citations on AI search surfaces are not forever. The median time for half of a piece of content’s AI citations to drop off is 4.5 weeks, according to the Scrunch + Stacker dataset of 3.5 million citation events from September 2025 to March 2026. Citations decay fast, and most SEO engagements do not plan for that. Treat the 4.5-week figure as a benchmark to reproduce, not a fixed constant. Citation visibility is a distribution measured over repeated sampling, so confirm the half-life on your own priority set with longitudinal tracking before planning against it.

This piece names the decay pattern, breaks it down by content type and Information Gain Score grade, and lays out a refresh playbook that scales the response to the content. The closest mental model is fresh produce. Some content has a long shelf life. Some content goes bad inside a month. The discipline is knowing which is which and pricing the refresh work into the program.

The number itself is recent. Until late 2025, nobody had a clean longitudinal dataset of AI citation events. The Scrunch + Stacker study and a parallel report from AuthorityTech are the first wave of empirical work on this. Both studies converge on the same finding: citation half-lives are short, and most SEO engagements are pricing them too long.

TL;DR

- Citation Half-Life is the time for half of a piece of content’s AI citations to drop off. Industry median is 4.5 weeks. Half of all AI citations are on content less than 13 weeks old.

- Decay rates vary by platform. ChatGPT cites content under three months old most often. Perplexity runs on a longer six-week cycle for many topics. Google AI Overviews sit in between, with about a monthly refresh cycle.

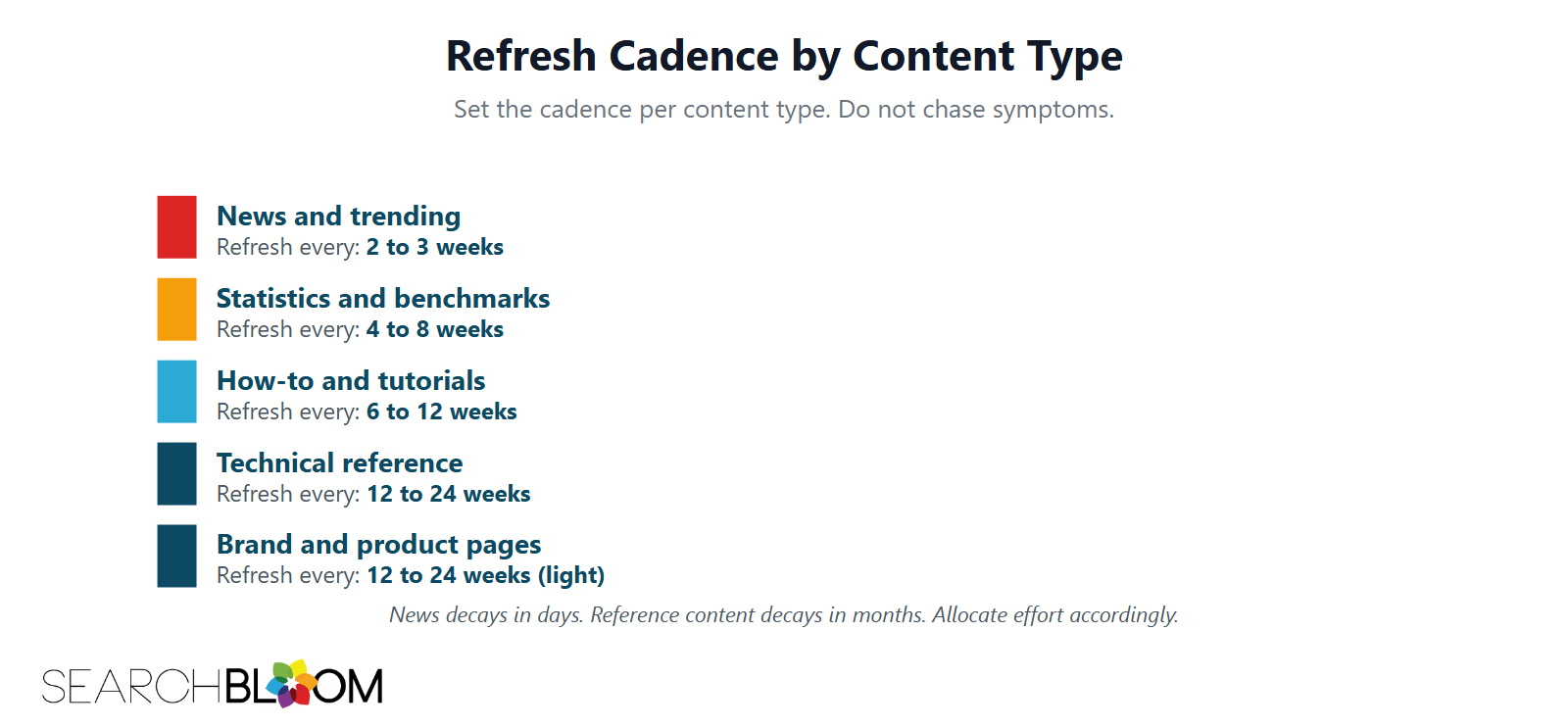

- Decay rates vary by content type. News and trending content decays in days. Technical reference content decays in months. Brand pages decay slowest.

- Decay correlates with Information Gain Score grade. Higher IGS grades hold citations longer. The substance that earned the citation is also what keeps it.

- The refresh playbook scales with content type. News-and-trending: refresh every 2 to 3 weeks. Technical reference: every 6 to 12 weeks. Evergreen brand pages: every 3 to 6 months.

Why Citation Half-Life Matters

Most SEO engagements report on citations the way they used to report on rankings. A page got cited in an AI Overview last week. The slide deck shows the citation. The slide deck stays current for a quarter. By the time the quarterly review lands, the citation is gone and nobody noticed.

The mismatch between citation half-life and reporting cadence is a measurement problem. A quarterly report on AI citations is reading a snapshot that, on average, is two refresh cycles old. Half the cited pages have lost the citation already. The report misrepresents the program’s current state.
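To make the mismatch concrete, here is a minimal sketch that assumes citations decay exponentially at the median half-life. The exponential model and the function name `surviving_fraction` are illustrative assumptions; the studies report a median half-life, not a decay curve.

```python
def surviving_fraction(elapsed_weeks: float, half_life_weeks: float = 4.5) -> float:
    """Share of the original citations still live after elapsed_weeks.

    Assumes simple exponential decay, which is an illustrative model only.
    """
    if half_life_weeks <= 0:
        raise ValueError("half_life_weeks must be positive")
    return 0.5 ** (elapsed_weeks / half_life_weeks)


# Compare a weekly, a one-half-life, and a quarterly reporting lag.
for lag_weeks in (1, 4.5, 13):
    share = surviving_fraction(lag_weeks)
    print(f"{lag_weeks:>4} weeks old: {share:.0%} of citations remain")
```

Under this assumption, a snapshot that is one half-life old already overstates the citation set by a factor of two, and a snapshot that is a full quarter old retains well under a quarter of what it shows.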

A second mismatch is on the content side. Programs build a piece of content once. The launch hits AI citation surfaces. The team moves on. Six weeks later, the citation is gone and no one ran the refresh. The content sits at its launch state while the citation curve crashes around it.

Pricing the half-life into the program is the fix. Refresh cadence becomes a line item. Specific content types get specific cadences. Reporting changes from a quarterly snapshot to a weekly or biweekly trend.
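A weekly trend can be built from repeated prompt runs rather than a single screenshot. The sketch below is one hedged way to do that. The input shape (pairs of an ISO week label and a cited flag) and the function name `weekly_citation_rate` are hypothetical, and the sample data is invented for illustration.

```python
from collections import defaultdict


def weekly_citation_rate(samples):
    """Return {iso_week: share of runs in which the page was cited}.

    samples is an iterable of (iso_week, was_cited) pairs, one per prompt run.
    Weeks are returned in sorted order, so zero-padded labels like "2026-W09"
    sort chronologically.
    """
    totals = defaultdict(lambda: [0, 0])  # week -> [cited_runs, all_runs]
    for week, was_cited in samples:
        totals[week][0] += int(bool(was_cited))
        totals[week][1] += 1
    return {week: cited / runs for week, (cited, runs) in sorted(totals.items())}


# Hypothetical sampling log for one page.
log = [
    ("2026-W10", True), ("2026-W10", True), ("2026-W10", False), ("2026-W10", True),
    ("2026-W11", True), ("2026-W11", False),
]
for week, rate in weekly_citation_rate(log).items():
    print(week, f"{rate:.0%}")
```

Reporting the rate per week, instead of a yes or no per quarter, shows the decay as it happens.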

The Industry Data

Two studies set the working numbers. Scrunch + Stacker, published in March 2026, analyzed 3.5 million citation events across ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude from September 2025 to March 2026. The headline finding: the median half-life across the dataset is 4.5 weeks.

The second is AuthorityTech’s freshness study, published in February 2026. It finds 50 percent of AI citations come from content less than 13 weeks old. Content updated within three months gets cited roughly twice as often as outdated content.

A third reference point comes from Rank.bot’s analysis in late 2025: content under three months old is three times more likely to be cited than older content. The three sources converge on the same shape: fresh content gets cited disproportionately, and the citation drop-off is steep.
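These published figures are benchmarks, so it helps to estimate a half-life on your own tracked set. The following is a minimal sketch, assuming you already record how many of a cohort's original citations are still observed each week. The function name `estimate_half_life`, the linear interpolation between weekly samples, and the example counts are all illustrative assumptions, not the method used by either study.

```python
from typing import Optional, Sequence


def estimate_half_life(weekly_live_counts: Sequence[int]) -> Optional[float]:
    """Estimate the number of weeks until half of a cohort's citations are gone.

    weekly_live_counts[i] is how many of the cohort's original citations are
    still observed i weeks after the baseline sample (index 0).
    Returns None if the cohort never drops to half within the tracked window.
    """
    if not weekly_live_counts or weekly_live_counts[0] <= 0:
        raise ValueError("need a positive baseline count at week 0")
    target = weekly_live_counts[0] / 2
    for week in range(1, len(weekly_live_counts)):
        prev, curr = weekly_live_counts[week - 1], weekly_live_counts[week]
        if curr <= target:
            # Linear interpolation between the two samples around the target.
            return (week - 1) + (prev - target) / (prev - curr)
    return None


# Hypothetical cohort: 40 citations at baseline, tracked for six weeks.
print(estimate_half_life([40, 34, 29, 25, 21, 18, 15]))
```

A result of None is informative too: it means the tracking window is shorter than the half-life for that cohort.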

The 4.5-week median is the load-bearing number. Inside that median sits a wide range. News and trending content decays in days. Technical references decay over months. The next sections break the variance down.

Decay Rates by Platform

Citation cycles vary by AI surface. The three big surfaces in 2026 are ChatGPT, Google (AI Overviews and AI Mode), and Perplexity. On Google, the cycle reflects normal index freshness in core ranking, not a separate AI lever.

ChatGPT runs on the shortest refresh cycle. The Scrunch + Stacker data shows ChatGPT citations skew strongly toward content under three months old. Bi-weekly content refreshes drive the highest citation lift. The platform treats freshness as a primary signal.

Google AI Overviews and AI Mode sit in the middle. Citations skew toward content that is less than three to six months old. Monthly refresh cycles align with how often Google re-indexes priority pages. AI Mode in particular runs query fan-out (9 to 11 sub-queries per user query) and pulls fresher sources for the time-sensitive fan-out branches.

Perplexity runs on a longer cycle. Many topics show citation patterns where six-week-old content is still active. Perplexity’s retrieval surface emphasizes authoritative sources alongside fresh ones, which extends the half-life of strong content.

The platform variance has a working implication. A program running across all three surfaces needs at least two refresh cadences. Bi-weekly for ChatGPT-priority content. Monthly for the broader set. The full 6-week cycle that works for Perplexity-only is too slow for ChatGPT-heavy programs.
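The platform cycles described above can be written down as a small lookup. This is a sketch under stated assumptions: the interval values are read off the prose (bi-weekly, monthly, six-week), the platform keys are hypothetical labels, and choosing the shortest interval for a page that targets several surfaces is one reasonable policy, not a rule from the studies.

```python
# Refresh intervals in weeks, read off the platform cycles described above.
# These are working assumptions, not measured constants.
PLATFORM_REFRESH_WEEKS = {
    "chatgpt": 2,      # bi-weekly
    "google_ai": 4,    # roughly monthly (AI Overviews and AI Mode)
    "perplexity": 6,   # longer six-week cycle
}


def refresh_interval_weeks(priority_platforms):
    """Return the shortest refresh interval among the platforms a page targets."""
    platforms = list(priority_platforms)
    if not platforms:
        raise ValueError("at least one platform is required")
    unknown = set(platforms) - set(PLATFORM_REFRESH_WEEKS)
    if unknown:
        raise KeyError(f"no cadence defined for: {sorted(unknown)}")
    return min(PLATFORM_REFRESH_WEEKS[p] for p in platforms)


print(refresh_interval_weeks(["chatgpt", "perplexity"]))  # the ChatGPT cadence wins
print(refresh_interval_weeks(["perplexity"]))
```

Taking the minimum encodes the point that a Perplexity-only cadence is too slow once ChatGPT is a priority surface.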

Decay Rates by Content Type

Inside each platform, content type shifts the half-life range substantially.

News and trending content has the shortest half-life. Citations on news articles often die inside a week. The window is short by design. Fresh news displaces old news on every query.

Statistics, benchmarks, and data round-ups decay on a one-to-three-month cycle. Updated stats get cited. Two-year-old stats get cited only as a footnote, if at all. A blog post titled “X Statistics for 2026” loses citation share fast when 2027 rolls around.

How-to and tutorial content decays on a three-to-six-month cycle. The procedure is durable. The references to specific tools, versions, and APIs are not. A tutorial that referenced an older model in 2024 reads as dated by 2026. Citations follow the version drift.

Technical reference content (definitions, frameworks, deep explainers) decays slowest among the on-page content types, on a six-to-twelve-month cycle. The concept does not move. The retrieval system still finds it relevant. Pages of this type that earned a citation tend to hold it through multiple refresh cycles.

Brand and product pages are a separate category. Citation patterns on brand pages correlate more with brand mention volume in external sources than with on-page freshness. The half-life is long when the brand has steady external mention flow and short when external mentions stall.

Citation Half-Life by Information Gain Score Grade

A Searchbloom hypothesis, supported by partner-program data over six months, is that citation half-life correlates with Information Gain Score grade. Substance that earned the citation is what keeps it.

Working bands:

| IGS Grade | Typical Citation Half-Life | Refresh Cadence |
|---|---|---|
| A (over 0.75) | 12 to 16 weeks | Every 3 to 4 months |
| B (0.60 to 0.75) | 8 to 12 weeks | Every 2 to 3 months |
| C (0.45 to 0.60) | 4 to 8 weeks | Every 6 to 8 weeks |
| D (0.30 to 0.45) | 2 to 4 weeks | Every 2 to 4 weeks if worth refreshing |
| F (under 0.30) | Under 2 weeks | Refresh or reallocate |

These are working anchor points, not universal numbers. The actual half-life inside each band varies by content type, platform mix, and topical cluster. What stays consistent is the steep monotone relationship. Higher IGS pages keep their citations longer because the substance is harder to copy and the citation rationale persists.

The grade-to-half-life relationship has an operational implication. A-tier and B-tier pages are the highest-impact refresh targets, because each refresh hour buys longer citation life. D-tier and F-tier pages cost the same hour and buy a few weeks. Allocate refresh effort by grade.
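Allocating by grade can be sketched as a lookup plus a sort. The half-life bands below are copied from the working bands table. The function names, the example URLs and scores, the inclusive lower bounds for grades B through D, and the use of the band midpoint as the ranking key are all assumptions made for illustration.

```python
# Half-life bands in weeks per grade, copied from the working bands table.
HALF_LIFE_WEEKS = {
    "A": (12, 16),
    "B": (8, 12),
    "C": (4, 8),
    "D": (2, 4),
    "F": (0, 2),  # the table says "under 2 weeks"; 0 is an assumed floor
}


def igs_grade(score: float) -> str:
    """Map an IGS score to a letter grade.

    The table defines A as strictly over 0.75. Treating the other lower
    bounds as inclusive is an assumption, since the table leaves ties open.
    """
    if score > 0.75:
        return "A"
    if score >= 0.60:
        return "B"
    if score >= 0.45:
        return "C"
    if score >= 0.30:
        return "D"
    return "F"


def rank_refresh_targets(pages):
    """Sort (url, igs_score) pairs so the longest expected citation life comes first."""
    def band_midpoint(page):
        low, high = HALF_LIFE_WEEKS[igs_grade(page[1])]
        return (low + high) / 2
    return sorted(pages, key=band_midpoint, reverse=True)


# Hypothetical pages and scores, for illustration only.
pages = [("/pricing-guide", 0.41), ("/glossary/entity", 0.81), ("/stats-2026", 0.62)]
for url, score in rank_refresh_targets(pages):
    print(url, igs_grade(score), HALF_LIFE_WEEKS[igs_grade(score)])
```

Because each refresh is assumed to cost the same hour, ranking by expected half-life is a rough proxy for citation life bought per hour.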

The Refresh Playbook by Content Type

Refresh effort comes in three sizes. Light refresh: 30 to 60 minutes per page. Medium refresh: half a day. Heavy refresh: a full editorial cycle.

News and trending content (light refresh). Add the most recent data point. Update the dateline. Re-pull the top-10 SERP and check whether the current peer set has shifted. Re-publish.

Statistics and benchmarks (medium refresh). Pull current numbers from primary sources. Update the chart or table. Add a “what changed” note that explains the delta from last refresh. Re-run an Embedding Audit to confirm IGS holds.

How-to and tutorial content (medium refresh). Verify each tool reference is current. Update version numbers and API references. Test the procedure once. Update screenshots if interfaces changed. Re-publish.

Technical reference content (heavy refresh). Add a new section that scopes any new dimension that entered the cluster. Update the diagrams. Re-run the IGS audit against the current top-10. Update the FAQ block. Re-publish.

Brand and product pages (light refresh on cadence, heavy refresh on launch). Light refresh updates pricing, customer count, or specific feature mentions. Heavy refresh happens on a product launch or rebrand. The cadence is steady but light.
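A scheduled playbook needs a due-date check. The sketch below uses only the cadences this article states explicitly (news every 2 to 3 weeks, technical reference every 6 to 12 weeks, evergreen brand pages every 3 to 6 months). Approximating 3 and 6 months as 13 and 26 weeks is an assumption, as are the content-type keys and the three status labels.

```python
from datetime import date, timedelta

# (earliest, latest) refresh interval in weeks per content type.
# Months are approximated as 13 and 26 weeks, which is an assumption.
# Content types without an explicitly stated cadence are left out.
CADENCE_WEEKS = {
    "news": (2, 3),
    "technical_reference": (6, 12),
    "brand": (13, 26),
}


def refresh_status(content_type: str, last_refreshed: date, today: date) -> str:
    """Classify a page as 'fresh', 'due', or 'overdue' for its content type."""
    earliest, latest = CADENCE_WEEKS[content_type]
    age = today - last_refreshed
    if age > timedelta(weeks=latest):
        return "overdue"
    if age >= timedelta(weeks=earliest):
        return "due"
    return "fresh"


# Hypothetical page: a news article last refreshed on 1 January 2026.
print(refresh_status("news", date(2026, 1, 1), date(2026, 1, 16)))
```

Running a check like this weekly turns the cadence into a queue, which is what keeps refresh work scheduled rather than reactive.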

The total refresh cost on a 50-page program runs about 30 hours per month at the working cadences. That is the line item AI search optimization adds to the marketing budget. It is not optional.

Five Failure Modes

Quarterly reporting on weekly citations. A quarterly slide deck on AI citation share is reading a snapshot two refresh cycles old. The deck makes the program look more stable than it is. Stakeholders draw the wrong conclusions about what is working.

Refreshing on symptoms. A page lost its citation. The team rushes to refresh. By the time the refresh deploys, the citation cycle has moved on to other pages. Reactive refresh is always behind the curve. Scheduled refresh on cadence beats it every time.

One refresh cadence for all content types. A program that refreshes every page every quarter under-serves news content and over-serves technical reference. Different content types need different cadences. Force-fitting one rhythm wastes effort on stable pages and misses citation windows on time-sensitive pages.

No refresh budget. Programs that build content but do not price refresh hours into the program create a steady decay curve. Citations fall. The program looks like it is failing because it is. Better content does not fix it. A refresh line item does.

Refresh that swaps the date but not the substance. Changing the published date and a few sentences fools nobody. Retrieval systems look at content vectors and entity adjacency, not on-page date metadata. A surface-level refresh is detectable. A real refresh adds substance.
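The last failure mode can be caught before publishing with a rough lexical check. This is a stand-in, not a model of how retrieval systems score content: it uses Python's standard `difflib` to measure how much of the word sequence changed between two versions. The function names and the 10 percent threshold are hypothetical, and an embedding-based comparison would be closer to what the article describes.

```python
import difflib


def changed_share(old_text: str, new_text: str) -> float:
    """Return the share of word-level content that differs (0.0 to 1.0)."""
    matcher = difflib.SequenceMatcher(
        None, old_text.split(), new_text.split(), autojunk=False
    )
    return 1.0 - matcher.ratio()


def is_surface_refresh(old_text: str, new_text: str, min_changed: float = 0.10) -> bool:
    """Flag a refresh whose text changed less than min_changed (assumed threshold)."""
    return changed_share(old_text, new_text) < min_changed


# Hypothetical versions: only the date line changes in the first comparison.
body = " ".join(f"w{i}" for i in range(40))
old_version = "Updated January 2026. " + body
date_swap = "Updated March 2026. " + body
real_refresh = old_version + " " + " ".join(f"new{i}" for i in range(30))

print(is_surface_refresh(old_version, date_swap))
print(is_surface_refresh(old_version, real_refresh))
```

A lexical diff cannot tell whether the added text has substance, so treat a pass here as necessary, not sufficient.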

Where Citation Half-Life Sits in the Framework

Citation Half-Life is a measurement concept that overlays the entire Corpus Engineering framework. It is not a specific component. It is the time dimension that runs across all six components.

Inside MERIT, Citation Half-Life sits inside Transform. Transform is the pillar that keeps and adapts the corpus over time. Citation Half-Life is the metric that tells Transform when to act.

The concept pairs with Corpus Drift. Corpus Drift is the landscape-side mechanism that erodes Information Gain Score. Citation Half-Life is the citation-side outcome that the erosion produces. The two run on different cadences. Corpus Drift runs quarterly. Citation Half-Life runs by content type and platform mix.

Citation Half-Life also pairs with the refresh side of the Embedding Audit workflow. The Embedding Audit measures IGS at publish. Citation Half-Life sets the cadence for the next audit.

Frequently Asked Questions

What is Citation Half-Life?

Citation Half-Life is the time it takes for half of a piece of content’s AI citations to drop off. Industry data puts the median at 4.5 weeks across ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude.

Where does the 4.5-week median come from?

The Scrunch + Stacker study, published in March 2026, analyzed 3.5 million citation events from September 2025 to March 2026. The median half-life across the full dataset is 4.5 weeks. AuthorityTech’s parallel freshness study finds 50 percent of citations come from content less than 13 weeks old.

How does platform affect Citation Half-Life?

ChatGPT runs the shortest cycle (bi-weekly refresh impact is strongest). Google AI Overviews and AI Mode sit in the middle (monthly cycle). Perplexity runs a longer six-week cycle for many topics.

How does content type affect Citation Half-Life?

News and trending: days. Statistics and benchmarks: one to three months. How-to and tutorials: three to six months. Technical reference: six to twelve months. Brand pages: long when external mention flow is steady.

How does IGS grade affect Citation Half-Life?

Higher Information Gain Score grades hold citations longer. A-tier pages (over 0.75 IGS) hold 12 to 16 weeks. C-tier pages (0.45 to 0.60) hold 4 to 8 weeks.

What does a refresh actually do?

A refresh updates the substance, not the date. Real refreshes add new data, update tool references, scope new adjacent entities, and confirm the IGS holds against the current top-10. Surface-level refreshes that only change the date are detectable and ineffective.

How much refresh effort does a typical program need?

About 30 hours per month for a 50-page program running mixed content types at the working cadences. The exact number scales with content mix and IGS grade distribution.

Does Citation Half-Life apply to traditional SEO?

It applies, but with a longer half-life. Traditional organic rankings decay more slowly than AI citations, often on a multi-month cadence. The same underlying drift mechanisms apply.

How is Citation Half-Life different from content decay?

Content decay is the broader concept. Citation Half-Life is the specific measurement: half-life of AI citations on a piece of content. Content decay drives Citation Half-Life.

Where does Citation Half-Life fit inside the MERIT Framework?

Inside Transform. MERIT names Transform as the pillar that keeps and adapts the corpus over time. Citation Half-Life is the metric that tells Transform when to refresh.

The Bottom Line

Citation Half-Life is the time for half of a piece of content’s AI citations to drop off. Industry data puts the median at 4.5 weeks. Variance by platform, content type, and Information Gain Score grade is wide. A cadence that fits one bucket is wrong for another.

Without the refresh discipline, programs build content once and watch citations decay. Quarterly reports lag the actual state by two refresh cycles. The program looks healthier than it is.

With the discipline, refresh is a line item. News-and-trending runs on a 2-to-3-week cycle. Technical reference runs on a 6-to-12-week cycle. Brand pages run on a 3-to-6-month cycle. The total cost is real and the citation curve flattens.

Grade each page by Information Gain Score, pair the grade with the half-life cadence, and the refresh hours go to the pages that buy the most citation life per hour spent.