Updated May 6, 2026

“Screaming Frog v22 closed the last gap between the Information Gain Score on paper and the editorial workflow on the ground. The Embedding Audit is what you do with it, and it takes about thirty minutes per priority page once the configuration is in place.”

~ Cody C. Jensen, CEO, Searchbloom

Screaming Frog v22 shipped vector embedding extraction in April 2026, and it quietly became the most consequential SEO tool release of the AI search era. Most operators have not yet realized what the feature can do once it is configured correctly. A single tool you already own can now do the work that previously required a Python notebook, an OpenAI API integration, and about forty lines of code: compute the Information Gain Score for a priority page against the actual top-10 SERP competitors, before you publish.

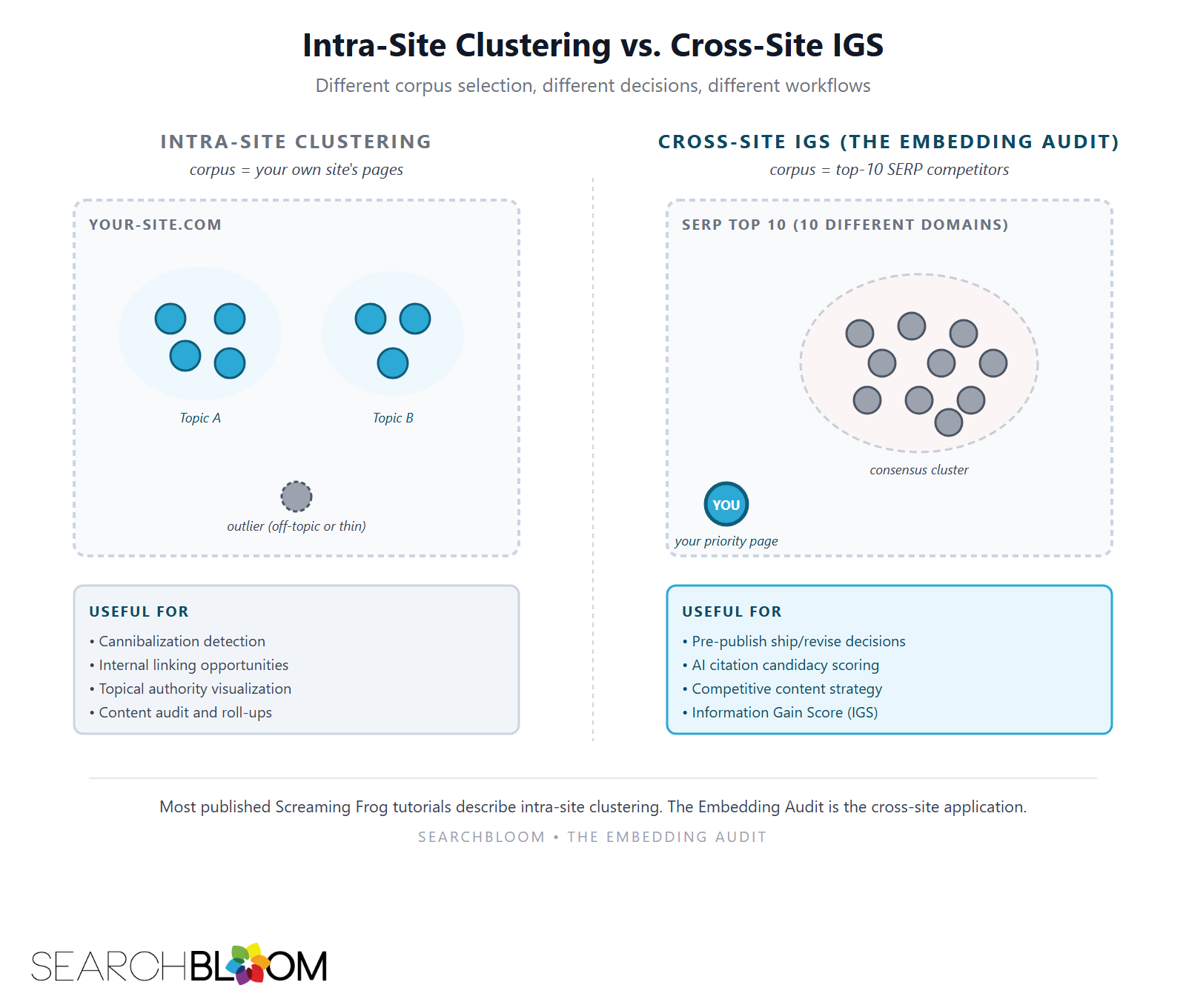

The catch is that nobody has written down how to do it. Look at the current top-10 coverage of Screaming Frog and embeddings: the official release notes, Mike King’s “Vector Embeddings Is All You Need,” Francesca Tabor’s content cluster tutorial, Ian Lurie’s content audit assistant, and Moz’s internal linking piece. All of them describe intra-site applications: cluster your own pages, find linking opportunities within your own site, audit your own content cannibalization. None of them does the cross-site comparison the IGS framework requires. This article is the operational walk-through of that cross-site workflow inside Screaming Frog. We call the method the Embedding Audit, and the property it measures Embedding Strength.

The trilogy that introduced the framework lives at the parent article on Information Gain in SEO. The detailed pieces cover Information Gain Density (the substance count), Information Gain Score (the math and the embedding-model choice), and Vector Shift (the geometric framing). This piece is the tooling layer underneath them: how to run the math through the SEO tool 90% of operators already pay for.

TL;DR

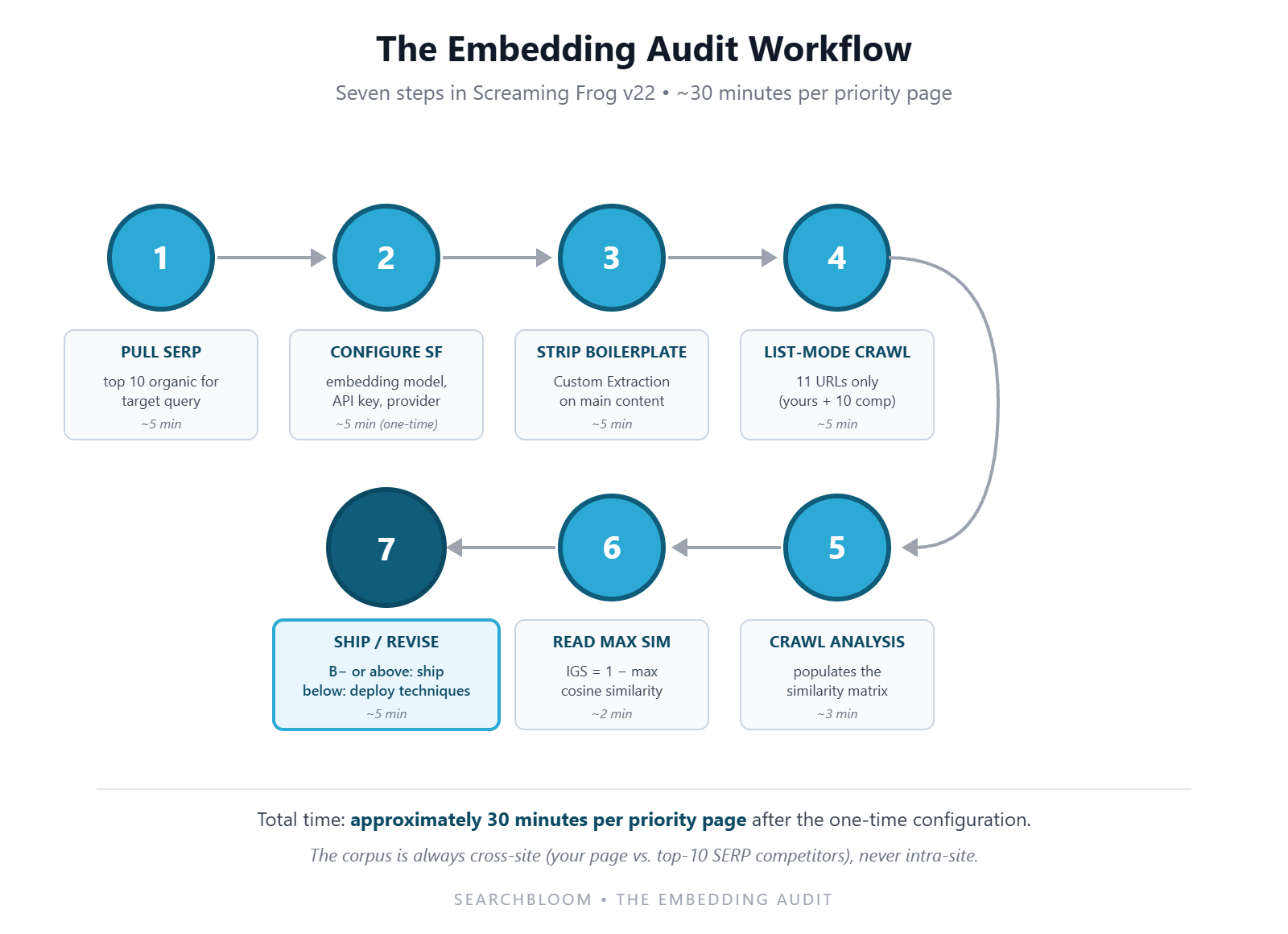

- The Embedding Audit is a named, repeatable workflow for computing IGS against the top-10 SERP competitors using Screaming Frog v22. Pull the SERP, run a list-mode crawl with embeddings enabled, read the Semantically Similar tab, take 1 minus the maximum similarity, map it to a letter grade, and publish or revise.

- Embedding Strength is the property the audit measures. A page with high Embedding Strength has its embedding sitting outside the consensus cluster of competitors. That produces a high IGS and reliable AI citation candidacy. A page with low Embedding Strength sits inside the cluster and gets absorbed into AI synthesis without attribution.

- The load-bearing distinction the existing coverage misses: intra-site versus cross-site. Every published Screaming Frog embeddings tutorial does intra-site clustering, finding similarities among your own pages. The Embedding Audit does cross-site comparison, scoring your draft against the actual ranking competitors. They are different workflows for different decisions.

- The right embedding model in Screaming Frog is text-embedding-3-large for most teams (OpenAI default, $0.13 per 1M tokens, ~100ms latency). Switch to voyage-3 if cost matters at scale. Switch to a self-hosted model if data residency forbids API egress.

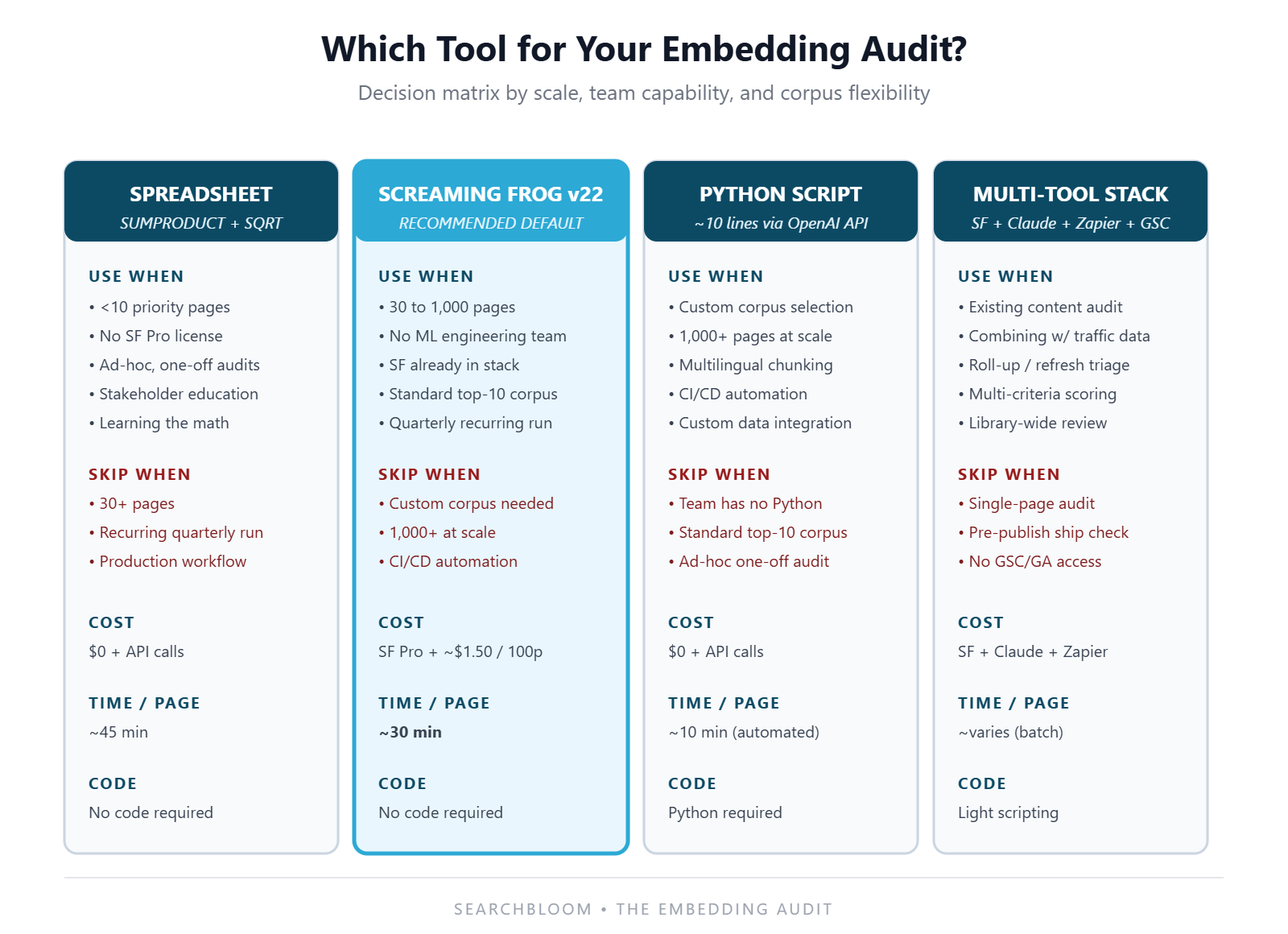

- Screaming Frog wins over Python for 30 to 1,000 priority pages at agencies and in-house teams without an ML engineer. Python wins for custom corpus selection, automation pipelines, or scoring beyond 1,000 pages where SF’s UI starts to bottleneck.

- Five named failure modes specific to Screaming Frog: boilerplate inclusion, long-page truncation, list-mode misconfiguration, JavaScript rendering surprises, and stale embeddings on re-crawls. Each has a known fix.

- Embeddings are necessary but not sufficient. Mike King’s “Vector Embeddings Is All You Need” framing oversells the standalone value. Embeddings measure differentiation. The substance work that produces differentiation is the Information Gain Density work the IGD spoke describes. Run the Embedding Audit. Deploy the 12 Information Gain Techniques to move the score. Re-audit.

Why Screaming Frog Matters Here

The honest reason this article exists: most operators will not write Python to compute IGS. The math is straightforward. The existing IGS spoke gives a ten-line code example. But a tool that already lives in the SEO workflow beats a Jupyter notebook every time when the goal is consistent application across every priority page on every engagement. Screaming Frog SEO Spider v22 published vector embedding capture as a built-in feature, with support for OpenAI, Anthropic, Google Gemini, Ollama, and custom endpoints. The licensing and the workflow are familiar to anyone who has run a technical SEO audit in the last decade.

The strategic implication: the same operational discipline the IGS spoke describes is now executable inside the existing technical SEO toolchain without any code at all. That includes the pre-publish IGS check, the calibration grid, publish at B- or above, and re-score quarterly. The tradeoff is configuration depth and corpus flexibility. The Python approach still wins on both for advanced cases. For the 80% of priority page lists at typical agencies and partner engagements, Screaming Frog is now the right tool.

What Screaming Frog v22 Actually Gives You

The release notes cover the feature in full. This section is a fast recap of what matters for the Embedding Audit:

- Vector embedding extraction via Configuration > Content > Embeddings, with provider selection (OpenAI, Anthropic, Google Gemini, Ollama, or a custom endpoint), API key entry, and model selection.

- The Semantically Similar tab, which surfaces near-duplicate and near-match pages within the crawl set with cosine similarity scores from 0 to 1. Default threshold is 0.95, configurable down to 0.5.

- Semantic Search, a query-driven search across the crawled set that returns ranked matches by embedding similarity rather than keyword presence.

- Content Cluster Diagram, the visualization that plots each URL in 2D embedding space and clusters semantically related pages together.

- Low Relevance Content Detection, which flags outlier pages that deviate from the centroid of the rest of the crawl, useful for spotting off-topic or thin content.

The Embedding Audit primarily uses the embedding extraction feature and the Semantically Similar tab. The Content Cluster Diagram is useful for visualization but not load-bearing for the score. The Semantic Search tab is useful for the second-order workflows (internal linking, keyword-to-page mapping) covered later.

The Gap: Intra-Site vs Cross-Site IGS

This is the load-bearing distinction the existing coverage misses. It is the reason the Embedding Audit exists as a named method rather than a recap of the SF release notes.

Francesca Tabor walks through Screaming Frog v22 to visualize topical authority. She crawls one site (yours), embeds every page on it, and uses the Content Cluster Diagram to show which pages cluster together and which sit outside the cluster as outliers. Ian Lurie audits existing content with Screaming Frog plus Claude. He embeds your pages and asks Claude to find opportunities (roll-ups, refreshes, gaps) within your own corpus. Moz writes about internal linking with vector embeddings. The implicit corpus is your own site’s pages. Every existing tutorial assumes the corpus is your own site.

That assumption is correct for the questions those tutorials answer. How is my own content organized? Where are my internal linking opportunities? Which of my pages cannibalize each other? It is wrong for the question the Information Gain Score answers. IGS is a cross-site comparison. The whole point of the score is to measure whether your draft is different from the actual top-10 ranking competitors for your target query, not whether it is different from your other pages on the same topic.

The two workflows produce different decisions:

- Intra-site clustering tells you whether your own pages are saying different things from each other. Useful for cannibalization, internal linking, and topical coverage gaps. Says nothing about whether the SERP will pick your page over a competitor.

- Cross-site IGS tells you whether your draft is differentiated from the existing top-ranked content on your target query. Useful for pre-publish publish/revise decisions, AI citation candidacy, and competitive content strategy. Says nothing about how your page sits among your other content.

Most teams run intra-site embedding workflows in Screaming Frog and assume they are doing IGS. They are not. The Embedding Audit fixes the corpus selection, which is the entire game.

The Embedding Audit: A Seven-Step Workflow in Screaming Frog

The named method. About thirty minutes per priority page once the API configuration is in place, plus a one-time tool setup of about ten minutes.

Step 1: Pull the Top-10 SERP for Your Target Query

Use any SERP source that gives you ranking URLs. Ahrefs. Semrush. The SERP API in your preferred toolchain. A manual incognito search. Capture the URLs of the top 10 organic results for the exact query your priority page is targeting. Do not include People Also Ask snippets, ad results, AI Overview citations, or sitelinks. The corpus is the ten organic competitors.

If your priority page already ranks somewhere in the top 10, exclude it from the competitor list (you do not score against yourself) and substitute the page at position 11. The corpus is always 10 distinct competitor URLs that are not yours.
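
If you pull the SERP programmatically, the corpus rule above can be sketched in Python. This is a minimal sketch, not a Screaming Frog feature: it assumes `ranked_urls` holds organic results only, in rank order, with at least 11 entries, and it treats any URL on the priority page’s host (ignoring a leading `www.`) as yours. The function and helper names are illustrative.

```python
from urllib.parse import urlsplit


def _host(url: str) -> str:
    """Lowercased hostname with any leading 'www.' removed."""
    host = urlsplit(url.strip()).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def _page_key(url: str) -> tuple[str, str]:
    """Host plus path without a trailing slash, used to spot duplicate URLs."""
    return (_host(url), urlsplit(url.strip()).path.rstrip("/"))


def build_corpus(ranked_urls: list[str], priority_url: str, size: int = 10) -> list[str]:
    """Return the first `size` distinct organic URLs that are not on your own host.

    Skipping your own result means the next-ranked URL (position 11) fills the gap.
    """
    own_host = _host(priority_url)
    corpus: list[str] = []
    seen: set[tuple[str, str]] = set()
    for url in ranked_urls:
        key = _page_key(url)
        if key[0] == own_host or key in seen:
            continue  # your own page, or a duplicate of one already kept
        seen.add(key)
        corpus.append(url)
        if len(corpus) == size:
            return corpus
    raise ValueError(f"need {size} competitor URLs, found only {len(corpus)}")
```

The list-mode paste list is then your priority URL followed by the ten returned competitors.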

Step 2: Configure Screaming Frog v22 for Embedding Extraction

Open Screaming Frog. Navigate to Configuration > Content > Embeddings. Enable the feature. Select your provider. OpenAI is the default for most teams. Use voyage-3 via custom endpoint if cost matters. Use Ollama for self-hosted. Enter your API key.

Select the embedding model. Recommended defaults from the IGS spoke:

- text-embedding-3-large (OpenAI, 3,072 dimensions, $0.13 per 1M tokens) for the general default.

- voyage-3 (Voyage AI, 1,024 dimensions, $0.06 per 1M tokens) if cost matters at scale.

- BGE-M3 via Ollama or a custom endpoint (1,024 dimensions, free if self-hosted) if data residency rules out API egress.

Save the configuration. The model selection should be consistent across all your audits so scores are comparable.

Step 3: Strip Boilerplate from the Embedding Input

This step is what separates a useful Embedding Audit from a noisy one. It is the failure mode most teams encounter on their first run. Screaming Frog by default embeds the rendered HTML of each page. That includes site navigation, footers, sidebars, related-posts widgets, and any boilerplate that is identical across pages on the same site. Boilerplate pumps up cosine scores because every page on the same site shares it.

In Screaming Frog v22, the cleanest fix is the Custom Extraction feature. Use it to pull the main content area of each page (typically a CSS selector like article, main, or a content-specific class on each competitor’s site), then configure embeddings to run against the extracted content rather than the full HTML. For mixed-source competitor sets where no single selector works across every site, run two crawls: one with Custom Extraction for the pages where you can identify a content selector, and one with full-HTML embeddings as a fallback for the rest. Prefer the cleaner extraction when both are available.

The cost of skipping this step is real. We have measured cosine score inflation of 0.10 to 0.20 on partner audits where boilerplate was not stripped. That can flip a B+ page into a C+ on the letter grade scale. It produces a false negative on the publish decision.
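
Screaming Frog’s Custom Extraction handles this inside the tool. If you ever preprocess pages outside it (for the spreadsheet or Python paths), the same idea looks roughly like the sketch below, which uses only Python’s standard-library `html.parser`. It assumes the main content sits in an `article` or `main` element and that `nav`, `aside`, `footer`, `script`, `style`, and `noscript` blocks inside it are boilerplate; real competitor templates will often need per-site selectors instead.

```python
from html.parser import HTMLParser

# Elements treated as boilerplate even when they sit inside the main container.
SKIP_TAGS = {"nav", "aside", "footer", "script", "style", "noscript"}


class MainContentParser(HTMLParser):
    """Collects visible text inside one container element, skipping boilerplate tags."""

    def __init__(self, container: str) -> None:
        super().__init__()
        self.container = container
        self.container_depth = 0  # > 0 while inside the container element
        self.skip_depth = 0       # > 0 while inside a skipped element within it
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == self.container:
            self.container_depth += 1
        elif self.container_depth and tag in SKIP_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag == self.container and self.container_depth:
            self.container_depth -= 1
        elif self.skip_depth and tag in SKIP_TAGS:
            self.skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self.container_depth and not self.skip_depth:
            self.chunks.append(text)


def extract_main_text(html: str, containers: tuple[str, ...] = ("article", "main")) -> str:
    """Return the text of the first container that yields any content, else ''."""
    for container in containers:
        parser = MainContentParser(container)
        parser.feed(html)
        parser.close()
        if parser.chunks:
            return " ".join(parser.chunks)
    return ""
```

Falling back from `article` to `main` mirrors the selector order suggested above; an empty result is a signal to find a site-specific selector rather than to embed the full HTML silently.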

Step 4: Run a List-Mode Crawl on the 11 URLs

Switch Screaming Frog from Spider mode to List mode via Mode > List. Paste in the eleven URLs: your priority page plus the ten competitors. Start the crawl.

List mode is critical here. Spider mode would follow links and crawl whole sites. That is the wrong corpus for cross-site IGS, and it burns API credits on irrelevant pages. List mode crawls exactly the URLs you provide and nothing else.

If competitor pages render content via JavaScript, enable JS rendering under Configuration > Spider > Rendering. This costs more time and more API calls. It is non-negotiable for SPA-style competitor pages where the visible content is not in the initial HTML response.

Step 5: Run Crawl Analysis

After the crawl completes, run Crawl Analysis > Start. This populates the Semantically Similar tab with cosine similarity scores between pairs of URLs in the crawled set. An 11-URL crawl yields an 11-by-11 pairwise matrix (55 unique pairs), but the audit needs only one row of it: your priority page versus each of the 10 competitors, which is 10 scores.
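
To see the shape of what Crawl Analysis computes, here is a plain-Python sketch of the pairwise matrix and the single row the audit reads. The vectors would come from whatever embedding model you configured; the random vectors below are placeholders, and index 0 is assumed to be the priority page.

```python
import math
import random


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of vector norms."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def similarity_matrix(vectors: list[list[float]]) -> list[list[float]]:
    """Full n-by-n matrix of pairwise cosine similarities."""
    return [[cosine(u, v) for v in vectors] for u in vectors]


def priority_row(matrix: list[list[float]], priority_index: int = 0) -> list[float]:
    """The only row the audit needs: the priority page versus every other URL."""
    return [s for j, s in enumerate(matrix[priority_index]) if j != priority_index]


# Placeholder vectors: index 0 is the priority page, 1-10 are competitors.
rng = random.Random(0)
vectors = [[rng.gauss(0, 1) for _ in range(8)] for _ in range(11)]
matrix = similarity_matrix(vectors)
row = priority_row(matrix)
```

The matrix is symmetric with ones on the diagonal, so the competitor-to-competitor cells carry no information about your page; only `row` feeds the score.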

Step 6: Read the Maximum Similarity and Compute IGS

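Click on your priority page in the URL list. Open the Semantically Similar tab in the bottom panel. Screaming Frog displays the other URLs in the crawl ranked by cosine similarity to your page, with the score shown for each. The tab lists only pairs above the configured similarity threshold (0.95 by default), so lower the threshold toward its 0.5 floor before reading it. If no competitor clears even that floor, your maximum similarity is below 0.5, and the exact IGS has to be computed outside the tool.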

The maximum similarity is the first entry in the list. Read that number. Subtract it from 1.

That difference is your Information Gain Score.

Worked example. If the closest competitor matches your priority page at 0.71 cosine similarity, your IGS is 1 minus 0.71, which is 0.29. On the 13-grade letter scale from the IGS spoke, that is an F. The page is in the absorbed tier and should not publish. If the closest competitor matches at 0.42 cosine, your IGS is 0.58. That is a B and clears the citation threshold.
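
The read-out in Step 6 is one line of arithmetic once the similarities are in hand. A minimal sketch, assuming you have copied the priority page’s scores into a dict keyed by competitor URL (the URLs below are placeholders). It also returns the closest competitor, which is the page to read against yours if you end up revising.

```python
def information_gain_score(similarities: dict[str, float]) -> tuple[float, str]:
    """Return (IGS, closest competitor URL), where IGS = 1 - max cosine similarity."""
    if not similarities:
        raise ValueError("need at least one competitor similarity")
    closest = max(similarities, key=similarities.get)
    return 1 - similarities[closest], closest


igs, closest = information_gain_score({
    "https://competitor-a.example/guide": 0.71,
    "https://competitor-b.example/post": 0.64,
    "https://competitor-c.example/page": 0.58,
})
print(f"IGS {igs:.2f}, closest match {closest}")  # IGS 0.29, closest match https://competitor-a.example/guide
```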

Step 7: Decide Publish, Revise, or Re-Engineer

Map the IGS to the calibration grid from the IGS spoke. Find your row (saturation level of the topic) and your column (your site’s authority). The grid cell tells you the target IGS for the engagement.

- At or above the target IGS: publish.

- Up to 0.10 below target: revise. Identify which competitor is your closest match, read their page section by section against yours, and deploy two or three of the 12 Information Gain Techniques to push your embedding away from theirs.

- More than 0.10 below target: re-engineer. The page is structurally too similar to consensus content. Add a contrarian framing, a proprietary stat, an SME quote, a named failure mode. Re-run the audit after the revision.
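
The grid lookup depends on the calibration grid in the IGS spoke, so the target is left as an input here. A minimal sketch of the three-way rule, assuming the target comes from that grid, that a score exactly at target publishes (consistent with publishing at B- or above), and that “far below” means more than 0.10 under target.

```python
def publish_decision(igs: float, target: float, reengineer_gap: float = 0.10) -> str:
    """Map an IGS and its grid target to 'publish', 'revise', or 're-engineer'."""
    if igs >= target:
        return "publish"
    if target - igs > reengineer_gap:
        return "re-engineer"  # structurally too close to consensus content
    return "revise"           # close enough that two or three techniques may clear it
```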

The audit becomes a quarterly rhythm rather than a one-time check. Re-score every priority page on a 90-day cadence and watch for drift as the SERP saturation set evolves.

Embedding Strength: The Property the Audit Measures

The Embedding Audit produces a number (IGS) and a letter grade. Underneath both is the qualitative property we call Embedding Strength. This is the editorial language that travels well in non-technical conversations. A page has high Embedding Strength when its embedding sits outside the consensus cluster of competitors, and low Embedding Strength when it sits inside the cluster.

The reason to coin the term distinct from IGS is that IGS is a number, and numbers do not travel well in editorial discussions with non-technical stakeholders. Saying “this page scored 0.42 IGS” requires the listener to know the formula, the threshold, and the letter grade scale. Saying “this page has weak Embedding Strength” travels naturally to a content director, a partner stakeholder, or an editor without any of that context. The number is for the audit. The property is for the conversation.

In the editorial workflow we use at Searchbloom, every priority page carries an Embedding Strength rating in the content brief: weak, borderline, strong, or dominant. Those map cleanly to the IGS grade tiers (Absorbed, Modest, Meaningful, Substantial), but the brief and the editorial review never use the underlying numbers. The audit produces the number. The brief carries the property. The publish decision references the property.

Three operational implications of treating Embedding Strength as the unit:

- Editorial training becomes possible. Editors learn to recognize weak, borderline, strong, and dominant content by reading the page rather than running an audit. They build the skill the same way they learn to recognize good and bad headlines without measuring CTR. The audit confirms the read. The read becomes faster.

- Cross-team conversations stay grounded. A content strategist, a partner SME, and a designer can discuss “this page needs stronger Embedding Strength before launch” without the conversation requiring an explanation of cosine similarity.

- The property compounds across pages, not just within them. A site with strong Embedding Strength across its priority page list builds topical authority and earns citation share on a category level. Not just on individual queries. The audit is per-page. The property is per-program.

When Screaming Frog Wins Versus Python Versus Spreadsheet

The IGS spoke describes three ways to compute the score. Spreadsheet (no code). Python (ten-line script). Production pipeline. Screaming Frog v22 is a fourth path. The right choice depends on scale, team makeup, and corpus flexibility.

Screaming Frog Wins When

- Priority page list is between 30 and 1,000 pages.

- Team has no Python or ML engineering capacity.

- SEO workflow already runs through Screaming Frog (technical audits, log file analysis, schema validation).

- You need integrated reporting (the Embedding Audit results live in the same project as the rest of the technical SEO data).

- The corpus is straightforward: top-10 organic ranking pages, English language, standard HTML rendering.

Python Wins When

- You need custom corpus selection (specific competitors not in the top 10, AI Overview citation set rather than organic, multilingual sets requiring per-language embedding models).

- You are running 1,000+ pages and need automation, scheduling, or CI/CD integration.

- You need fine-grained chunking on long pages (Screaming Frog truncates above the model’s input window; Python lets you embed by H2 section and average).

- You are integrating the score with other custom data (CRM, partner-specific pricing, internal performance dashboards).

- You need code-level reproducibility for a research project, a paper, or a regulated environment.

Spreadsheet Wins When

- Priority page list is under 10.

- You do not have a Screaming Frog Pro license and the audit is ad-hoc.

- You are learning the method and want to see the math step by step.

- You are explaining IGS to a stakeholder and need the calculation visible cell by cell.

Lurie’s Multi-Tool Stack Wins When

The audit needs to combine Embedding Strength with traffic data, GSC performance, and editorial criteria beyond differentiation. Ian Lurie’s content audit assistant workflow (Screaming Frog plus Claude plus Zapier MCP plus GSC plus GA) is the right tool when the question is “which existing pages should I roll up, refresh, or kill?” rather than “should this draft publish today?” The two questions are different. The Embedding Audit is for publish/revise on a single priority page. Lurie’s stack is for triage across an existing content library.

The Practical Default

For most Searchbloom partner engagements, the priority page list runs 30 to 100 pages. The team is non-technical on the SEO side. Screaming Frog Pro is already in the toolchain. So Screaming Frog is the practical default. We escalate to Python when the corpus is unusual (multilingual, AI-Overview-citation-based) or the volume crosses 1,000 pages. We use the spreadsheet method only for ad-hoc one-off audits or stakeholder education.

Five Failure Modes Specific to Screaming Frog

Each one is a real pattern we have seen on partner audits. Each has a known fix.

Failure 1: Boilerplate Inclusion

The default Screaming Frog embedding extraction runs against the full rendered HTML. So navigation, footer, sidebar, and template content all contribute to the embedding. Two pages on the same site share boilerplate. Two pages from different sites do not. Within-site cosine scores get pumped up. Cross-site cosine scores can get pushed down if competitor pages have different boilerplate from your own.

For the Embedding Audit, where the corpus is cross-site by design, boilerplate distorts the read in two ways. Competitor pages that share a template with each other (Amazon-style listicle templates, for example) cluster artificially tight, which makes the consensus cluster look more uniform than the content really is. And any template text your page shares with a competitor inflates your similarity to that competitor, which lowers your apparent IGS. Fix: use Custom Extraction to pull main content only, then configure embeddings to run against the extracted text. We have measured 0.10 to 0.20 cosine score inflation on audits where this was skipped.

Failure 2: Long-Page Truncation

OpenAI’s text-embedding-3-large accepts up to 8,191 input tokens. Voyage-3 accepts up to 32,000. BGE-M3 accepts up to 8,192. Long-form competitor pages (10,000-word pillar pieces, big guides, long reference pages) get truncated at the model’s limit, so only the first portion of the page contributes to the embedding. Depending on the model, the cosine similarity you measure is against roughly the first 6,000 to 25,000 words of the competitor, not the full page.

For most priority page audits this is fine, because the leading content usually captures the page’s main semantic content. For deep-tail audits where the competitor’s differentiating substance lives in later sections, truncation produces a false-high IGS (your page looks more different than it actually is). Fix: switch to voyage-3 for the longest competitor sets, or chunk by section in Python and average.
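
The Python fallback for long pages can be sketched as below. The `embed` argument stands in for whatever client call your provider exposes (it is hypothetical here), the token estimate uses a rough 0.75-words-per-token heuristic for English rather than a real tokenizer, and sections are averaged without length weighting; weighting by section length is a reasonable alternative.

```python
import math
import re
from typing import Callable, Sequence

# Input limits for the models discussed in this article, in tokens.
MAX_TOKENS = {"text-embedding-3-large": 8191, "voyage-3": 32000, "bge-m3": 8192}


def likely_truncated(text: str, model: str, words_per_token: float = 0.75) -> bool:
    """Rough check: does the estimated token count exceed the model's input limit?"""
    estimated_tokens = len(text.split()) / words_per_token
    return estimated_tokens > MAX_TOKENS[model]


def split_by_h2(html: str) -> list[str]:
    """Split HTML at each <h2> tag; any intro before the first H2 is its own section."""
    return [part for part in re.split(r"(?i)(?=<h2\b)", html) if part.strip()]


def mean_embedding(sections: Sequence[str], embed: Callable[[str], list[float]]) -> list[float]:
    """Embed each section, average the vectors, and re-normalize to unit length."""
    vectors = [embed(section) for section in sections]
    mean = [sum(column) / len(vectors) for column in zip(*vectors)]
    norm = math.sqrt(sum(x * x for x in mean))
    return [x / norm for x in mean]
```

In practice each section would be stripped to plain text before embedding, and any single section that still exceeds the limit would need to be split further.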

Failure 3: List-Mode Misconfiguration

Operators new to Screaming Frog often default to Spider mode for the Embedding Audit. That then crawls each competitor URL and follows internal links across all 10 competitor sites. The corpus balloons from 11 URLs to several thousand. The API costs escalate. The resulting cosine similarities are computed against an enormous and irrelevant set rather than the 10 competitor pages.

Fix: switch to List mode (Mode > List) before pasting in the URLs. Confirm the crawl is set to crawl exactly 11 URLs before running. Watch the URL queue counter during the crawl. If it grows past 11, the configuration is wrong.
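
If you export the crawled addresses after the run, a short script can confirm nothing leaked beyond the list. A minimal sketch; how you export the URL column from Screaming Frog is up to you, and a listed URL that redirects can surface under a different address, which this check would flag for manual review.

```python
def check_list_mode_crawl(input_urls: list[str], crawled_urls: list[str], expected: int = 11) -> list[str]:
    """Return a list of problems; an empty list means the crawl matched the input exactly."""
    listed, crawled = set(input_urls), set(crawled_urls)
    problems = []
    if len(listed) != expected:
        problems.append(f"input list has {len(listed)} distinct URLs, expected {expected}")
    extra = sorted(crawled - listed)
    missing = sorted(listed - crawled)
    if extra:
        problems.append(f"{len(extra)} crawled URLs were not in the list (Spider mode?): {extra[:3]}")
    if missing:
        problems.append(f"{len(missing)} listed URLs were not crawled: {missing[:3]}")
    return problems
```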

Failure 4: JavaScript Rendering Surprises

Modern competitor pages often render their main content via JavaScript after the initial HTML loads. Newer SaaS marketing sites and product pages especially. Screaming Frog’s default crawl returns the raw HTML response. That may contain only a navigation skeleton and a placeholder for the main content. The embedding gets computed against the skeleton and produces meaningless similarity numbers.

Fix: enable JavaScript rendering under Configuration > Spider > Rendering > JavaScript. The crawl takes longer and costs more API credits. But the embeddings are computed against the actual visible content. For competitor sets that mix server-rendered and JS-rendered pages, turn on JS rendering by default. The cost of running it on server-rendered pages is small compared to the cost of bad embeddings on JS-rendered ones.

Failure 5: Stale Embeddings on Re-Crawl

On re-crawls of the same URL set, Screaming Frog may serve cached embeddings rather than rebuilding them against fresh content. This happens especially if the source pages have not changed but the competitor list has. The audit returns an IGS based on stale data, which produces drift in re-scoring rounds.

Fix: clear the embedding cache before re-running quarterly audits. The Crawl Analysis re-run does not always wipe cached embeddings, so explicit cache clearing is the safer default. If you suspect competitor pages have updated, force a re-fetch by clearing the URL from the crawl history before re-running.
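
Outside Screaming Frog, the same problem is usually avoided by keying cached embeddings on the content itself rather than on the URL alone. A minimal Python sketch of that pattern (it does not describe how Screaming Frog’s own cache works): the key combines URL, model name, and a SHA-256 hash of the extracted text, so an edited page or a model switch forces a fresh embedding. `embed` is a placeholder for your provider call.

```python
import hashlib
from typing import Callable


class EmbeddingCache:
    """In-memory embedding cache keyed by URL, model, and a hash of the page text."""

    def __init__(self) -> None:
        self._store: dict[tuple[str, str, str], list[float]] = {}

    def get_or_embed(self, url: str, model: str, text: str,
                     embed: Callable[[str], list[float]]) -> list[float]:
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        key = (url, model, digest)
        if key not in self._store:  # new URL, new model, or changed content
            self._store[key] = embed(text)
        return self._store[key]
```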

Beyond IGS: Other Embedding-Driven Audits in Screaming Frog

The Embedding Audit is the cross-site application. The same Screaming Frog feature supports a family of intra-site applications that the existing tutorials cover well. Brief routing for each:

- Topical authority visualization. Use the Content Cluster Diagram on a full-site crawl. Pages that cluster tightly around a topic center show coverage. Pages that sit outside the cluster as outliers are off-topic or thin. Francesca Tabor’s tutorial is the cleanest seven-step walkthrough.

- Internal linking opportunities. Use the Semantic Search tab against your own site. Find pages with high topical similarity that are not currently linked to each other. Moz has covered this for the cosine-similarity-based internal-linking workflow.

- Content audit and roll-up identification. Combine Screaming Frog’s embeddings with traffic data and an AI assistant. Ian Lurie’s audit assistant workflow is the best published version: Screaming Frog plus Claude plus Zapier MCP plus GSC plus GA, with custom criteria kept in an opportunities.md file.

- Cannibalization detection. Use the Semantically Similar tab on a full-site crawl. Set the threshold around 0.80. That flags near-duplicate pages competing for the same intent.

- Redirect mapping. When migrating or consolidating, use Semantic Search to find the closest semantic match for each retiring page among the surviving pages. That gives you a starting list for 301 mapping.
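
The cannibalization check and the redirect-mapping step both reduce to pairwise cosine comparisons over your own pages. A plain-Python sketch, assuming you already have one embedding per URL (the vectors below are toy placeholders) and using the 0.80 starting threshold from the cannibalization bullet. The corpus here is intra-site, which is exactly why neither function is the Embedding Audit.

```python
import math
from itertools import combinations


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of vector norms."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def cannibalization_pairs(pages: dict[str, list[float]],
                          threshold: float = 0.80) -> list[tuple[str, str, float]]:
    """All page pairs at or above the threshold, most similar first."""
    pairs = [(u1, u2, cosine(v1, v2))
             for (u1, v1), (u2, v2) in combinations(pages.items(), 2)]
    return sorted((p for p in pairs if p[2] >= threshold), key=lambda p: p[2], reverse=True)


def redirect_candidates(retiring: dict[str, list[float]],
                        surviving: dict[str, list[float]]) -> dict[str, tuple[str, float]]:
    """For each retiring URL, the closest surviving URL and its similarity score."""
    mapping = {}
    for old_url, old_vec in retiring.items():
        scored = [(new_url, cosine(old_vec, new_vec)) for new_url, new_vec in surviving.items()]
        mapping[old_url] = max(scored, key=lambda item: item[1])
    return mapping


pages = {"/a": [1.0, 0.0], "/b": [0.9, 0.1], "/c": [0.0, 1.0]}
print(cannibalization_pairs(pages))
print(redirect_candidates({"/old-guide": [1.0, 0.1]}, {"/guide": [1.0, 0.0], "/pricing": [0.0, 1.0]}))
```

Treat the redirect output as a starting list for review: the closest semantic match is usually, but not always, the right 301 target.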

None of these is the Embedding Audit. They are different workflows for different decisions. The point of naming the Embedding Audit clearly is that operators reading the existing tutorials often think they have run the audit when they have actually run a topical authority visualization or a cannibalization check. The corpus selection (cross-site versus intra-site) is the entire game.

Embeddings Are Necessary But Not Sufficient

This is where the Searchbloom position diverges from the dominant framing of vector embeddings in 2026 SEO content. Mike King’s “Vector Embeddings Is All You Need” is the most-cited piece in the space. The framing in the title is doing real work in the SEO conversation. King’s central claim has three parts. Vector embeddings are now more important to SEO than the link graph. Google’s ranking system has shifted from lexical matching to semantic matching. The practitioners who understand embeddings will outperform the ones who do not.

He is right on the first two claims. The third is where Searchbloom’s position diverges. Embeddings are necessary but not sufficient. They are a measurement instrument, the same way Google Analytics is a measurement instrument for traffic. Running an embedding-aware SEO program without the substance work that produces differentiation is the same mistake as obsessing over GA dashboards without producing content that earns the traffic.

The substance work is the Information Gain Density work, specifically the 12 Information Gain Techniques: proprietary aggregate data, first-hand case studies, SME quotes, failure documentation, named failure modes with cohort detail, pricing transparency, process transparency, operational artifacts, decision frameworks, verbatim customer language, contrarian framings, and cross-domain synthesis. These are the moves that produce embedding shift, the geometric framing the Vector Shift spoke covers. The embedding measures whether the moves worked. The moves are what move the embedding.

The framing that matters: embeddings + IGD + conventional ranking signals + editorial discipline. Drop any of the four, and the program loses its edge. King’s emphasis on embeddings is correct as a corrective to the lexical-only framing of 2010s SEO. It is incorrect as a complete program. Some operators run an Embedding Audit, see a low IGS, and edit for synonym variation rather than add net-new substance. That produces gamed Vector Shift that decays as embedding models improve. The Vector Shift spoke covers the earned-versus-gamed distinction in detail.

The right operational read: use the Embedding Audit to measure where you stand, use the 12 Techniques to move the score, use the audit again to confirm the move was real. The audit is not the work. The audit measures the work.

Frequently Asked Questions

What is an Embedding Audit?

The Embedding Audit is a Searchbloom-coined method for computing the Information Gain Score against the actual top-10 SERP competitors for a target query. It uses Screaming Frog v22 (or any equivalent embedding-extraction toolchain). It is a cross-site, pre-publish workflow. It takes about thirty minutes per priority page. It produces a single number (IGS) plus a letter grade. B- or higher means publish. Below means revise. It is different from intra-site embedding workflows like topical authority visualization or content cannibalization detection.

What is Embedding Strength?

Embedding Strength is the qualitative property the Embedding Audit measures. A page has high Embedding Strength when its embedding sits outside the consensus cluster of top-ranking competitors and earns reliable AI citation candidacy. Low Embedding Strength means the page sits inside the cluster and gets absorbed into AI synthesis without attribution. The four working tiers are weak, borderline, strong, and dominant, mapping to the IGS letter grade scale.

Do I need Screaming Frog v22 to run an Embedding Audit?

No. The audit can run on any toolchain that extracts vector embeddings. Python via the OpenAI or voyage-3 API. A spreadsheet with embeddings pasted from an external API call. Ian Lurie’s multi-tool stack. Screaming Frog v22 is the recommended default for 30 to 1,000-page priority lists at agencies and in-house teams without ML engineering capacity. It folds the audit into an existing SEO workflow without code.

What is the difference between Screaming Frog’s Semantic Similarity feature and the Information Gain Score?

Screaming Frog’s Semantic Similarity feature computes pairwise cosine similarity between every pair of URLs in a crawl. The Information Gain Score is a specific use of those similarity values. It is 1 minus the maximum cosine similarity to a defined competitor set. Screaming Frog gives you the raw similarity values. The Embedding Audit sets the corpus (top-10 SERP competitors), the aggregator (max), and the read-out (1 minus max, mapped to the letter grade scale).
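
Written out, with d as the embedding of your priority page and c_1 through c_10 as the embeddings of the ten competitors:

```latex
\mathrm{IGS} = 1 - \max_{1 \le i \le 10} \cos(\mathbf{d}, \mathbf{c}_i),
\qquad
\cos(\mathbf{d}, \mathbf{c}_i) = \frac{\mathbf{d} \cdot \mathbf{c}_i}{\lVert \mathbf{d} \rVert \, \lVert \mathbf{c}_i \rVert}
```

The max is the aggregator: only the single closest competitor sets the score.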

Can Screaming Frog do cross-site IGS scoring out of the box?

Yes, with two configuration choices that the existing tutorials do not document. First, switch from Spider mode to List mode. Paste in your priority page URL plus the ten competitor URLs. Second, configure Custom Extraction to pull main content area selectors so boilerplate does not pump up cosine scores. Once those are in place, the Semantically Similar tab reads as the IGS calculation directly.

Which embedding model should I configure in Screaming Frog?

For most teams, text-embedding-3-large from OpenAI at $0.13 per 1M input tokens. Switch to voyage-3 at $0.06 per 1M tokens if you are running 1,000+ priority pages and cost matters. Switch to a self-hosted model (BGE-M3 via Ollama) if data residency forbids API egress. Skip BERT, RoBERTa, and pre-2024 transformer models. Their CLS-token embeddings perform worse on retrieval tasks and produce noisier IGS scores.

How much does a 100-page Embedding Audit cost in Screaming Frog?

About $0.21 per round at text-embedding-3-large rates. One hundred priority pages plus 10 competitors per page is 1,100 pages; at an average of 1,500 tokens each, that is 1.65M tokens, billed at $0.13 per 1M tokens. Quarterly re-scoring across the same 100 pages runs under $1 per year. The Screaming Frog Pro license is the larger cost, but most teams running this workflow already have it for technical SEO audits.
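
The arithmetic is easy to rerun for your own list. A minimal sketch using the per-token rates quoted in this article; the 1,500-token average is the assumption from the estimate above, and the function counts every competitor page separately even when priority pages share competitors, so it slightly overstates real spend.

```python
def audit_cost_usd(priority_pages: int, competitors_per_page: int = 10,
                   avg_tokens_per_page: int = 1_500, usd_per_million_tokens: float = 0.13,
                   rounds: int = 1) -> float:
    """Estimated embedding spend: every priority page plus its competitors, per round."""
    pages_embedded = priority_pages * (1 + competitors_per_page)
    total_tokens = pages_embedded * avg_tokens_per_page * rounds
    return total_tokens / 1_000_000 * usd_per_million_tokens


print(f"${audit_cost_usd(100):.2f} per round")                                            # $0.21 per round
print(f"${audit_cost_usd(100, rounds=4):.2f} per year")                                   # $0.86 per year
print(f"${audit_cost_usd(100, usd_per_million_tokens=0.06):.2f} per round on voyage-3")   # $0.10 per round on voyage-3
```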

Can I run an Embedding Audit without Screaming Frog?

Yes. The IGS spoke covers the three other paths in detail: a Python script (about ten lines using the OpenAI API), a spreadsheet method using SUMPRODUCT and SQRT formulas, and a production pipeline for at-scale automation. Screaming Frog is the recommended default for the operational scale most agencies and in-house teams run at. It is not the only valid path.

How does the Embedding Audit relate to the Information Gain Score?

The Embedding Audit is the workflow. The Information Gain Score is the formula and the resulting number. Embedding Strength is the qualitative property the score measures. Read them as a stack: the workflow (Embedding Audit) produces a number (IGS), the number maps to a property (Embedding Strength), and the property drives a decision (publish or revise).

How does this compare to Mike King’s vector embeddings approach?

King’s piece is the most-cited published treatment of embeddings in SEO. It is correct on the central claim that embeddings now matter more than the link graph for AI search ranking. Where Searchbloom’s position diverges is the “is all you need” framing in his title. Embeddings are necessary but not sufficient. The Embedding Audit measures differentiation. The substance work that produces differentiation is the Information Gain Density work the IGD spoke describes. Run the audit. Deploy the 12 Techniques to move the score. Re-audit. The audit is not the work. The audit measures the work.

What about Ian Lurie’s content audit workflow?

Lurie’s content audit assistant workflow is the right tool for a different question. He combines Screaming Frog plus Claude plus Zapier MCP plus GSC plus GA to triage an existing content library: which pages to roll up, refresh, kill, or ignore. The Embedding Audit is for the publish decision on a single priority page rather than the triage decision across many. The two workflows complement each other rather than compete.

How often should I re-run an Embedding Audit?

Quarterly is the right default for priority pages. Competitors update their pages. New entrants appear. The SERP saturation set drifts. Monthly re-runs add noise from SERP volatility without catching real shifts faster. Annual runs are too slow. We have measured IGS drift of 0.10 to 0.15 within a single quarter on competitive topics. Schedule the re-run as part of the quarterly priority-page review.

Where does this workflow fit inside the MERIT Framework?

The Embedding Audit runs the measurement layer of the MERIT Framework. It produces the IGS that the Transformation pillar tracks over time. It surfaces the differentiation gaps that the Evidence pillar fills via the 12 Information Gain Techniques. It does not directly address the Mentions, Relevance, or Inclusion pillars. Those need separate workflows (earned media tracking, structural audits, technical SEO). The audit is one instrument in the full framework, not the framework itself.

The Bottom Line

The Embedding Audit is the named method for computing the Information Gain Score against the actual top-10 SERP competitors using Screaming Frog v22 or any equivalent toolchain. Embedding Strength is the qualitative property the audit measures, expressed as weak, borderline, strong, or dominant. IGS is the formula underneath both. The cross-site corpus selection is the load-bearing distinction the existing Screaming Frog embedding tutorials miss. Intra-site clustering and cross-site IGS are different workflows for different decisions. Mixing them up produces publish decisions that look right and perform wrong.

For the broader framework, the parent article on Information Gain in SEO covers the survey-level synthesis. The dedicated pieces on Information Gain Density, Information Gain Score, and Vector Shift cover the count framework, the math, and the geometric framing respectively. This piece is the tooling layer underneath them. The audit is the work that connects all of it to the editorial workflow on Monday morning.